By some widely cited estimates, only 31% of projects succeed, and a significant share of failures trace directly back to poor risk management. Yet in many organisations, risk analysis is still treated as a formality: a register filled in at kick-off and rarely revisited. That mindset is costly. Effective project risk analysis is an ongoing discipline that protects your objectives, your budget, and your team's credibility. This guide walks you through the core methods, leading frameworks, advanced concepts, and the growing role of AI, so you can build a risk practice that genuinely works rather than one that simply looks good on paper.

Table of Contents

- What is project risk analysis?

- Key methods explained: Qualitative and quantitative analysis

- Frameworks and standards: PMBOK, PRINCE2, and evolving practices

- Advanced risk concepts: Inherent vs. contingent risks and complexity

- AI-assisted project risk analysis: Next-generation techniques

- The uncomfortable truth about project risk analysis: It's not the framework, it's the culture

- Strengthen your project outcomes with Pocket PMO

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Rigorous risk analysis matters | Robust risk analysis prevents costly project failures and delivers better outcomes. |
| Blend qualitative and quantitative | Combining subjective scoring with numerical modelling gives a clearer risk picture. |
| Iterate throughout the lifecycle | Effective risk analysis is an ongoing, adaptive process, not a single event. |
| Embrace advanced and AI tools | AI-driven methods enable predictive insights, but must be matched with expert oversight. |
| Culture trumps process | A risk-aware culture delivers bigger performance gains than methods alone. |

What is project risk analysis?

Project risk analysis is the structured process of identifying, assessing, and prioritising risks that could affect your project's objectives. Its core aim is simple: reduce uncertainty so you can make better decisions. But the execution is often where teams fall short.

At its most fundamental level, risk analysis answers three questions: What could go wrong? How likely is it? What would the impact be? From those answers, you build a picture of where to focus your attention and resources.

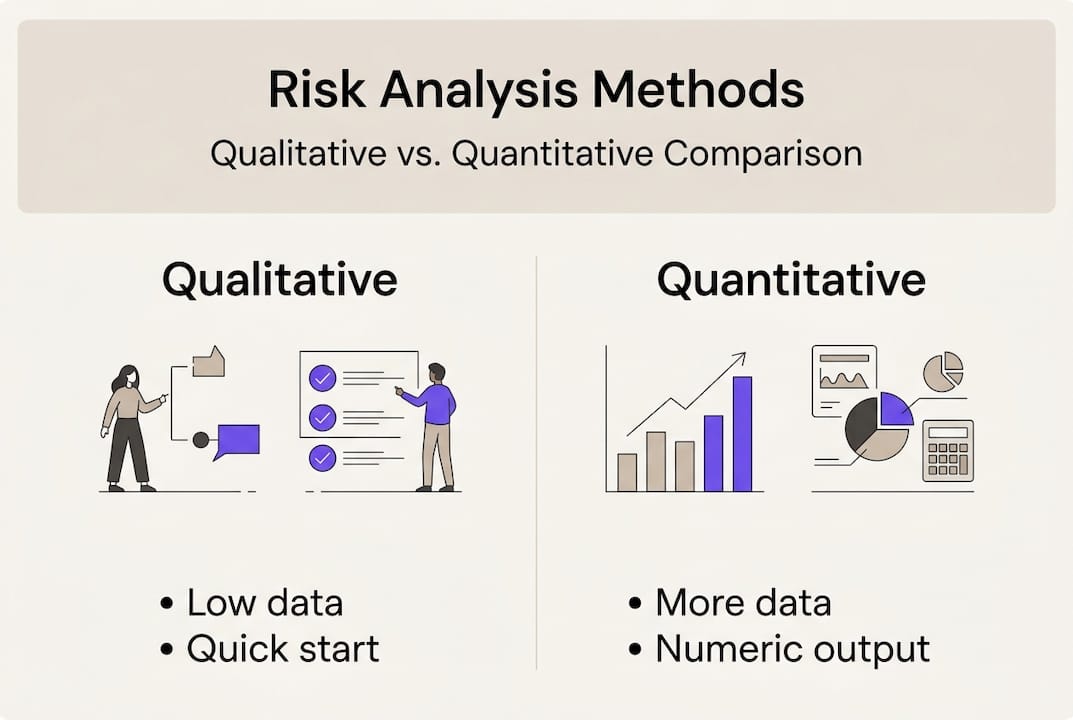

There are two primary approaches:

- Qualitative risk analysis uses expert judgement, probability-impact matrices, and heat maps to score risks subjectively. It is fast, accessible, and works well when data is limited.

- Quantitative risk analysis applies numerical methods such as Monte Carlo simulation and decision trees to model risk in terms of cost, schedule, or probability ranges. It requires more data but delivers more precise forecasts.

Modern risk analysis increasingly blends both approaches and layers in AI to process historical project data, flag emerging patterns, and generate scenario forecasts in real time. Strategies such as AI project tracking are reshaping how teams detect and respond to risk before it escalates.

One of the most persistent misconceptions is that risk analysis is a one-off task. You complete it during planning, file it away, and move on. This is precisely the habit that leads to costly surprises mid-delivery. Risk is dynamic. New risks emerge as your project evolves, and previously identified risks change in probability and impact. Treating analysis as a continuous activity, not a milestone checkbox, is what separates high-performing teams from the rest.

"Risk analysis is not a stage in the project—it is a thread running through every stage."

Building that mindset into your team culture is as important as selecting the right analytical method.

Key methods explained: Qualitative and quantitative analysis

Now that we have covered the 'what' of project risk analysis, let's look at the 'how' in practice.

Qualitative methods are the starting point for most projects. They include:

- Probability-impact matrices: Score each risk on likelihood and consequence, then plot it on a grid.

- Heat maps: Visualise risk concentration across your portfolio or workstreams.

- Expert scoring and workshops: Gather structured input from your team and stakeholders to surface risks you might not see from data alone.
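To make the probability-impact matrix concrete, here is a minimal Python sketch that scores each risk as likelihood × impact on a 5×5 grid and sorts the register into heat-map bands. The 1 to 5 scales, the band thresholds (15 and 8), the `Risk` class and the example risks are all illustrative assumptions rather than a standard; organisations calibrate their own.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    """One register entry scored on hypothetical 1-5 scales."""
    name: str
    likelihood: int  # 1 = rare ... 5 = almost certain
    impact: int      # 1 = negligible ... 5 = severe


def score(risk: Risk) -> int:
    """Probability-impact score: the cell value on a 5x5 matrix."""
    if not (1 <= risk.likelihood <= 5 and 1 <= risk.impact <= 5):
        raise ValueError(f"{risk.name}: scores must be between 1 and 5")
    return risk.likelihood * risk.impact


def band(value: int) -> str:
    """Map a score to a heat-map colour band (thresholds are assumptions)."""
    if value >= 15:
        return "high"
    if value >= 8:
        return "medium"
    return "low"


def prioritise(risks):
    """Return (name, score, band) tuples, highest score first."""
    scored = [(r.name, score(r), band(score(r))) for r in risks]
    return sorted(scored, key=lambda item: item[1], reverse=True)


register = [
    Risk("Key supplier delivers late", likelihood=3, impact=4),
    Risk("Data corruption during migration", likelihood=2, impact=5),
    Risk("Sponsor changes priorities", likelihood=4, impact=4),
    Risk("Tooling licence delay", likelihood=2, impact=1),
]

for name, value, colour in prioritise(register):
    print(f"{value:>2}  {colour:<6}  {name}")
```

The output is a ranked list, which is exactly what qualitative analysis is meant to produce: a priority order, not a forecast.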

Quantitative methods go further by attaching numbers to uncertainty. Expert judgement informs qualitative work, while probabilistic methods and simulations drive quantitative outputs. Common techniques include Monte Carlo simulation, sensitivity analysis, and decision tree modelling.
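A Monte Carlo simulation can be sketched in a few lines of Python. The example below assumes three hypothetical work packages, each with a three-point (low, most likely, high) cost estimate modelled as a triangular distribution, and reads off P50 and P80 totals from 10,000 runs. The package names and figures are invented for illustration; a real model would use your own estimates and possibly other distributions.

```python
import random
import statistics

# Hypothetical three-point cost estimates (low, most likely, high), in thousands.
WORK_PACKAGES = {
    "Design": (40, 50, 75),
    "Build": (120, 150, 220),
    "Test": (30, 40, 70),
}


def simulate_total_cost(packages, runs=10_000, seed=42):
    """Sample each package from a triangular distribution and sum per run."""
    rng = random.Random(seed)  # fixed seed so results are reproducible
    totals = []
    for _ in range(runs):
        # random.triangular's signature is (low, high, mode)
        totals.append(sum(rng.triangular(low, high, mode)
                          for low, mode, high in packages.values()))
    return totals


totals = simulate_total_cost(WORK_PACKAGES)
cuts = statistics.quantiles(totals, n=100)  # 99 percentile cut points
p50, p80 = cuts[49], cuts[79]
most_likely = sum(mode for _, mode, _ in WORK_PACKAGES.values())

print(f"Sum of most-likely estimates: {most_likely}")
print(f"P50 total: {p50:.0f}   P80 total: {p80:.0f}")
print(f"Contingency to reach P80: {p80 - most_likely:.0f}")
```

In this example the P50 total lands above the sum of the most-likely values (240) because each distribution is right-skewed; that gap is one way quantitative analysis helps justify a contingency budget.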

| Feature | Qualitative analysis | Quantitative analysis |
|---|---|---|
| Data requirement | Low | High |
| Speed | Fast | Slower |
| Output | Risk ranking/priority | Probability ranges, cost impact |
| Tools needed | Matrices, workshops | Simulation software, statistical models |
| Best for | Early-stage, data-light projects | Complex, high-value, data-rich projects |

Choosing the right method depends on three factors: project complexity, available data, and your timeframe. A small internal initiative benefits from a well-run qualitative workshop. A multi-year infrastructure programme demands quantitative modelling to justify contingency budgets and satisfy governance boards.

For teams managing multiple concurrent projects, the challenge is to apply proportionate rigour to each one without overwhelming your PMO's capacity.

Pro Tip: Never rely solely on one method. On complex projects, start with qualitative analysis to surface and rank risks, then apply quantitative techniques to the top-tier items. The combination gives you speed and precision where it matters most.
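The two-step approach in this tip can be sketched as follows: a qualitative pass ranks a hypothetical register by likelihood × impact, then a Monte Carlo pass quantifies only the top three risks. The occurrence probabilities, cost ranges and the cut-off of three are assumptions chosen for illustration.

```python
import random
import statistics

# Hypothetical register: qualitative scores (1-5) plus the extra data needed
# to quantify a risk: probability of occurring and a (low, mode, high) cost impact.
RISKS = [
    ("Sponsor changes priorities", 4, 4, 0.50, (20, 40, 90)),
    ("Key supplier delivers late", 3, 4, 0.35, (15, 30, 60)),
    ("Data corruption during migration", 2, 5, 0.15, (50, 80, 150)),
    ("Tooling licence delay", 2, 1, 0.20, (1, 2, 5)),
]


def top_tier(risks, n):
    """Qualitative pass: rank by likelihood x impact and keep the top n."""
    return sorted(risks, key=lambda r: r[1] * r[2], reverse=True)[:n]


def simulate_exposure(risks, runs=20_000, seed=7):
    """Quantitative pass: Monte Carlo of total cost exposure from the given risks."""
    rng = random.Random(seed)
    totals = []
    for _ in range(runs):
        total = 0.0
        for _name, _likelihood, _impact, prob, (low, mode, high) in risks:
            if rng.random() < prob:  # does this risk occur on this run?
                total += rng.triangular(low, high, mode)
        totals.append(total)
    return totals


selected = top_tier(RISKS, n=3)
exposure = simulate_exposure(selected)
p80 = statistics.quantiles(exposure, n=100)[79]

print("Quantified:", [r[0] for r in selected])
print(f"Mean exposure: {statistics.mean(exposure):.1f}   P80: {p80:.1f}")
```

The low-scoring licence risk never reaches the simulation, which is the point: modelling effort is spent only where the qualitative pass says it matters.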

Frameworks and standards: PMBOK, PRINCE2, and evolving practices

With the foundational methods covered, let's clarify how global frameworks shape practical risk analysis.

PMBOK 8 represents a significant shift. Rather than treating qualitative and quantitative analysis as separate, sequential processes, PMBOK 8 unifies them into a single iterative loop that runs throughout the project lifecycle. This reflects real-world practice: risk analysis should not stop after planning.

PRINCE2 takes a structured, theme-based approach. Its risk theme defines:

- Risk appetite and tolerance levels set by the project board.

- Mandatory risk registers and regular review checkpoints.

- Clear ownership of each risk, with defined responses and fallback positions.

- Integration with the project's exception process, so escalation is automatic when tolerances are breached.
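The tolerance-and-escalation idea lends itself to a simple rule check. This is a minimal sketch, not something PRINCE2 itself prescribes in code: the `Tolerance` and `StageForecast` classes, the 10% cost and 10-day schedule tolerances, and the example figures are hypothetical. The logic mirrors the principle that a forecast breach of board-set tolerance should be escalated rather than absorbed quietly.

```python
from dataclasses import dataclass


@dataclass
class Tolerance:
    """Hypothetical stage tolerances delegated by the project board."""
    cost_pct: float      # allowed overspend, e.g. 0.10 means +10%
    schedule_days: int   # allowed slippage in days


@dataclass
class StageForecast:
    budget: float
    forecast_cost: float
    planned_end_day: int
    forecast_end_day: int


def tolerance_breaches(forecast: StageForecast, tol: Tolerance) -> list:
    """List every tolerance the current forecast is expected to exceed."""
    breaches = []
    overspend = (forecast.forecast_cost - forecast.budget) / forecast.budget
    if overspend > tol.cost_pct:
        breaches.append(f"cost {overspend:.0%} over budget (tolerance {tol.cost_pct:.0%})")
    slip = forecast.forecast_end_day - forecast.planned_end_day
    if slip > tol.schedule_days:
        breaches.append(f"schedule {slip} days late (tolerance {tol.schedule_days})")
    return breaches


def review_stage(forecast: StageForecast, tol: Tolerance) -> str:
    """Escalate as soon as a breach is forecast, not at the next scheduled review."""
    breaches = tolerance_breaches(forecast, tol)
    if breaches:
        # In PRINCE2 terms, a forecast breach is raised to the board as an exception.
        return "ESCALATE: " + "; ".join(breaches)
    return "Within tolerance: continue"


stage_tol = Tolerance(cost_pct=0.10, schedule_days=10)
print(review_stage(StageForecast(200, 226, 90, 98), stage_tol))
print(review_stage(StageForecast(200, 210, 90, 95), stage_tol))
```

The first call prints `ESCALATE: cost 13% over budget (tolerance 10%)`; the second stays within tolerance.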

| Aspect | PMBOK 8 | PRINCE2 |
|---|---|---|
| Analysis style | Iterative, unified process | Structured theme with formal reviews |
| Risk ownership | Risk owner per risk | Explicitly assigned per register entry |
| Escalation mechanism | Change requests and replanning | Exception reports and board escalation |
| Iteration frequency | Continuous, per performance domain | Defined stage boundaries and ad hoc |

Both frameworks agree on one critical point: risk analysis is not a one-time deliverable. Adapting your analysis throughout the project lifecycle measurably improves outcomes. Teams that revisit their risk registers at regular intervals, not just at stage gates, consistently identify emerging threats earlier and respond more effectively.

The practical takeaway is to embed risk reviews into your regular project rhythm. Sprint retrospectives, monthly steering meetings, and change control processes are all natural homes for a brief but focused risk update. You can explore how Pocket PMO supports both approaches on the use cases page.

Advanced risk concepts: Inherent vs. contingent risks and complexity

Beyond frameworks, complex projects demand more nuanced approaches.

Inherent risks are built into the very fabric of your estimates and plan. They exist regardless of any mitigating action. A software migration project, for example, inherently carries the risk of data corruption. You cannot eliminate it entirely; you manage it through design and process.

Contingent risks, by contrast, only materialise if a specific condition is met. A supply chain delay becomes a real risk only if your primary supplier fails to deliver. Inherent risks sit within estimates; contingent risks cover a wider spectrum of conditional scenarios that require separate contingency budgets and trigger-based response plans.

Why does the distinction matter? Because misclassifying a contingent risk as inherent leads to over-padded baseline estimates, while treating an inherent risk as contingent leaves your project exposed with no structural response.
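One way to keep the two risk types apart in practice is to hold them in separate structures: inherent uncertainty folded into the base estimate (here via a three-point PERT mean), and contingent risks held as trigger-based entries whose expected values (probability × impact) size a separate contingency reserve. This is a simplified sketch; the class names, figures and triggers are hypothetical, and using expected value to size the reserve is one assumption among several (many teams use simulation percentiles instead).

```python
from dataclasses import dataclass


@dataclass
class InherentItem:
    """Uncertainty built into an estimate: always present, so it sits in the base."""
    name: str
    optimistic: float
    most_likely: float
    pessimistic: float

    def pert_estimate(self) -> float:
        # Three-point (PERT) weighted mean: (O + 4M + P) / 6
        return (self.optimistic + 4 * self.most_likely + self.pessimistic) / 6


@dataclass
class ContingentRisk:
    """Conditional scenario: only costs money if its trigger condition occurs."""
    name: str
    probability: float
    impact: float
    trigger: str

    def expected_value(self) -> float:
        return self.probability * self.impact


def build_budget(inherent, contingent):
    """Return (base estimate, contingency reserve), kept as separate figures."""
    base = sum(item.pert_estimate() for item in inherent)
    reserve = sum(risk.expected_value() for risk in contingent)
    return base, reserve


inherent = [
    InherentItem("Data migration effort", 30, 40, 62),
    InherentItem("Build effort", 100, 120, 170),
]
contingent = [
    ContingentRisk("Primary supplier fails to deliver", 0.2, 50,
                   trigger="Supplier misses the week-6 delivery date"),
    ContingentRisk("Regulator requests an extra audit", 0.1, 30,
                   trigger="Audit notice received before go-live"),
]

base, reserve = build_budget(inherent, contingent)
print(f"Base estimate: {base:.1f}   Contingency reserve: {reserve:.1f}")
for risk in contingent:
    print(f"  {risk.name}: watch for '{risk.trigger}'")
```

Because each contingent entry carries an explicit trigger, risk owners can say exactly when it turns into an issue, and reserve drawdowns stay traceable to named scenarios rather than leaking into base-case work.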

Signs your project needs to distinguish between these risk types:

- Your contingency budget is being drawn on regularly for base-case activities.

- Risk owners cannot articulate clear triggers for when a risk becomes an issue.

- Your risk register mixes operational uncertainties with conditional threat scenarios.

- Senior stakeholders question why contingency reserves are consumed before the project midpoint.

Large, complex portfolios also introduce nonlinear risk interactions. Traditional linear analysis assumes risks are independent. In reality, one risk event can amplify another, cascading in ways a simple probability-impact matrix will not capture. This is where advanced modelling techniques become essential.

Pro Tip: For high-complexity programmes, apply advanced Monte Carlo modelling with correlated risk variables, or use system dynamics to map risk interdependencies. This reveals cascade scenarios that simpler tools miss entirely.
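The effect of correlation is easy to demonstrate with a small simulation. The sketch below assumes two hypothetical risks whose cost overruns are normally distributed and whose shocks share a correlation coefficient `rho`; it compares the P90 of the combined overrun when the risks are treated as independent (`rho = 0`) and when they move together (`rho = 0.8`). It illustrates correlated Monte Carlo only; system dynamics modelling is a different technique and is not shown.

```python
import math
import random
import statistics


def simulate_total(rho, runs=50_000, seed=1):
    """Sum of two normally distributed cost overruns whose shocks have correlation rho."""
    rng = random.Random(seed)
    mu_a, sd_a = 100.0, 30.0  # hypothetical overrun distribution for risk A
    mu_b, sd_b = 80.0, 25.0   # hypothetical overrun distribution for risk B
    totals = []
    for _ in range(runs):
        z_a = rng.gauss(0, 1)
        # Two-variable Cholesky step: z_b is standard normal with corr(z_a, z_b) = rho.
        z_b = rho * z_a + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1)
        totals.append((mu_a + sd_a * z_a) + (mu_b + sd_b * z_b))
    return totals


for rho in (0.0, 0.8):
    totals = simulate_total(rho)
    p90 = statistics.quantiles(totals, n=10)[8]  # 90th percentile
    print(f"rho={rho}: mean={statistics.mean(totals):.0f}  P90={p90:.0f}")
```

Both runs have roughly the same mean (about 180), but the correlated case produces a noticeably higher P90, so an independence assumption would understate the tail exposure that contingency is meant to cover.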

AI-assisted project risk analysis: Next-generation techniques

Risk analysis is evolving rapidly thanks to AI. Here is what you need to know to stay ahead.

AI brings three core capabilities to project risk analysis: real-time risk forecasting, early pattern detection, and accelerated scenario modelling. AI-assisted analysis combines Monte Carlo simulation with machine learning algorithms to produce predictive models that learn from historical project data. The result is a risk analysis engine that can improve as it processes more projects.

Here is how to integrate AI tools into your risk process effectively:

- Gather and structure your data. AI tools are only as good as the data fed into them. Consolidate historical project records, risk registers, and issue logs into a consistent format before training any model.

- Define your risk indicators. Identify the leading signals in your project data (such as schedule variance, resource utilisation trends, and change request volume) that precede risk events. These become your model's input features.

- Train and validate your model. Run the AI against historical data to test its predictive accuracy. Validate outputs against known project outcomes before applying it to live projects.

- Iterate continuously. Feed new project data back into the model after each delivery. AI risk tools improve through iteration, so predictions generally sharpen as more project data accumulates.
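The four steps above can be sketched end to end with a deliberately simple model: plain logistic regression trained by batch gradient descent, using only the Python standard library. Everything here is an assumption for illustration. The records are synthetic (generated from an invented rule, not real project history), the three features follow the example indicators above, the learning rate and epoch count are arbitrary, and a 300/100 train/hold-out split stands in for validating against known outcomes. Commercial AI tools use richer models and data.

```python
import math
import random

# Leading indicators from the step list (names are illustrative).
FEATURES = ("schedule_variance", "resource_utilisation", "change_requests")


def sigmoid(z):
    z = max(-30.0, min(30.0, z))  # clamp to avoid math.exp overflow
    return 1 / (1 + math.exp(-z))


def make_history(n, seed=0):
    """Synthetic stand-in for consolidated historical records (NOT real data).
    Assumption: positive schedule_variance means behind plan, and risk events
    get likelier as all three indicators rise."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x = (rng.uniform(-0.2, 0.3), rng.uniform(0.6, 1.2), rng.randint(0, 10))
        true_logit = -9.2 + 16 * x[0] + 6 * x[1] + 0.6 * x[2]
        rows.append((x, 1 if rng.random() < sigmoid(true_logit) else 0))
    return rows


def fit(rows, epochs=500, lr=0.5):
    """Logistic regression via batch gradient descent on standardised features."""
    cols = list(zip(*(x for x, _ in rows)))
    means = [sum(c) / len(c) for c in cols]
    sds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) for c, m in zip(cols, means)]

    def scale(x):
        return [(v - m) / s for v, m, s in zip(x, means, sds)]

    data = [(scale(x), y) for x, y in rows]
    w, b = [0.0] * len(FEATURES), 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xs, y in data:
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, xs)) + b) - y
            grad_w = [g + err * xi for g, xi in zip(grad_w, xs)]
            grad_b += err
        w = [wi - lr * g / len(data) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(data)

    def predict(x):
        """Probability that a work package with indicators x has a risk event."""
        return sigmoid(sum(wi * xi for wi, xi in zip(w, scale(x))) + b)

    return predict


def accuracy(model, rows, threshold=0.5):
    return sum((model(x) >= threshold) == bool(y) for x, y in rows) / len(rows)


history = make_history(400)
train, holdout = history[:300], history[300:]  # validate on unseen records
model = fit(train)
print(f"Hold-out accuracy: {accuracy(model, holdout):.2f}")
# Live work package: 15% behind plan, 110% utilised, 7 change requests.
print(f"Risk-event probability: {model((0.15, 1.1, 7)):.2f}")
```

Step 4 then amounts to appending newly closed work packages to `history`, calling `fit` again, and re-checking hold-out accuracy before trusting the refreshed model on live projects.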

"Predictive analytics doesn't remove uncertainty—it narrows it, giving project teams the clarity to act earlier and more confidently."

One pitfall to watch: overreliance on AI-assisted project tracking without maintaining human context. Tools process patterns in data, but they cannot interpret organisational politics, stakeholder dynamics, or strategic shifts. AI works best as a decision support layer, not a replacement for experienced judgement.

The uncomfortable truth about project risk analysis: It's not the framework, it's the culture

After years of supporting project teams across complex programmes, one pattern stands out above all others. The teams that struggle with risk analysis are rarely struggling because they chose the wrong framework or missed a Monte Carlo run. They struggle because of entrenched habits: risk registers updated under pressure, reviews that rubber-stamp previous assessments, and a team culture where raising concerns feels career-limiting.

Organisations with mature risk cultures are reported to succeed at 2.5 times the rate of those relying on checklists alone. That statistic is striking, but it should not surprise any experienced PMO professional. Frameworks and tools create the conditions for good risk management. Culture determines whether those conditions are used.

Real improvement comes when risk reviews become genuine cross-functional conversations. When delivery leads, finance partners, and technical architects sit in the same room and challenge each other's assumptions openly, you surface the risks that no spreadsheet ever captures. Building a risk-aware project culture means making it psychologically safe to say "I think we have a problem here" at any stage of delivery.

Make your risk reviews open, iterative, and cross-functional. That is where the real value lives.

Strengthen your project outcomes with Pocket PMO

If this guide has reinforced one thing, it is that effective risk analysis requires the right tools working alongside the right habits. Pocket PMO is built precisely for that combination.

The platform brings together AI-driven risk analysis, real-time dashboards, and intelligent automation so your PMO can detect, assess, and respond to risks proactively. Whether you are running a single critical project or managing a complex multi-project portfolio, Pocket PMO gives you the visibility and decision-making confidence to stay ahead of issues rather than react to them. Ready to put it into practice? Launch your PMO with AI today, or take a closer look at what is possible when you explore key features built for modern risk management.

Frequently asked questions

What are the main differences between qualitative and quantitative project risk analysis?

Qualitative analysis prioritises risks using matrices and scoring scales based on expert judgement, while quantitative methods use numerical simulations and probabilistic models to forecast measurable outcomes.

How does AI improve project risk analysis?

AI integrates Monte Carlo simulation with machine learning to deliver real-time risk forecasting, pattern detection, and more accurate scenario planning using accumulated historical project data.

Why should risk analysis be iterative rather than one-off?

PMBOK 8 recommends iterative analysis across the full project lifecycle because risks evolve continuously, and regular reviews allow teams to catch emerging threats before they become costly issues.

What are the risks of overrelying on tools for analysis?

Tools require expert interpretation to be effective; relying on outputs without applying human judgement and organisational context can create blind spots that no algorithm will catch on its own.